GSA SER Link Lists

Understanding GSA SER Link Lists

In the world of automated link building, few tools match the raw versatility of GSA Search Engine Ranker. At the core of its functionality lies a crucial component that often determines the success or failure of a campaign: the curated collection of target URLs known as GSA SER link lists. These lists act as the highway system for the tool, directing it to the precise locations where backlinks can be constructed, verified, or leveraged for secondary tiers.

What Exactly Are GSA SER Link Lists?

A GSA SER link list is simply a text file, spreadsheet, or exported database containing URLs that the software can parse and use. The application can scrape search engines for targets on its own, but serious users quickly discover that manually compiled or purchased lists make campaigns far more efficient. Each line in a high-quality list represents an opportunity: a forum profile, a blog comment form, a web 2.0 signup, an indexer endpoint, or a pre-identified guestbook. Without robust GSA SER link lists, the tool spends much of its time crawling irrelevant domains and hitting dead ends.

Types of Links Commonly Found

Not all URLs are equal, and experienced practitioners segment their GSA SER link lists by engine type. You might have a dedicated list for article directories, another for social network profiles, and yet another for trackbacks. The most effective setups include contextual platforms where links sit inside a body of content, as these pass stronger signals than generic comment links. A properly organized collection of GSA SER link lists can be the difference between a penalty and a sustained ranking boost.

Why Quality Trumps Quantity Every Time

The golden age of spraying links across millions of auto-generated pages is over. Search engines now penalize patterns associated with low-effort spam, which means your GSA SER link lists need rigorous filtering. A list of 10,000 hand-tested do-follow forums will typically outperform a list of 1 million unverified URLs scraped from public sources. Clean lists reduce the load on your VPS, keep the software's retry queues short, and, most importantly, minimize the footprint that can trigger manual reviews.

Verification and Upkeep

Maintaining fresh GSA SER link lists is a continuous task. Platforms close, spam protections get stronger, and domains get deindexed. Careful users run daily checks with the built-in filters to remove URLs that return bad HTTP status codes or show no usable form fields. Over time, the list shrinks but becomes razor-sharp. Some vendors sell premium GSA SER link lists that are updated monthly, often including niche-specific targets that general scraping does not turn up.

How to Source Your Targets

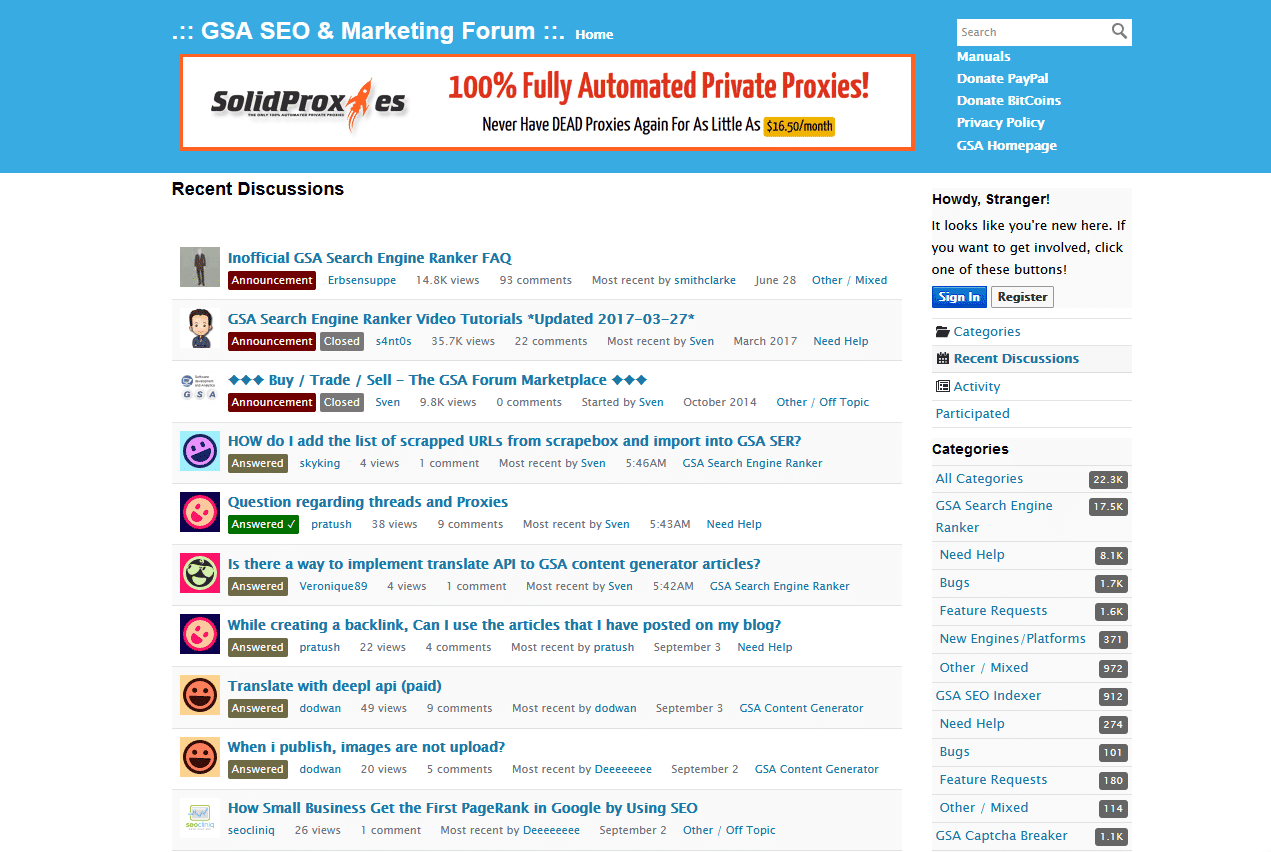

You have three primary avenues. The first is commercial: many marketplaces offer verified GSA SER link lists tailored to different campaign goals, from parasite SEO to pure indexation. The second is self-scraping, using the software's own footprint analysis alongside custom search engine queries. The third is community sharing, though this carries the risk of widely duplicated footprints. Whichever path you choose, always import small batches first and let them run for a few hours to observe the error rates before committing them to your main project.

Integration into Your Workflow

Once you possess a reliable set of GSA SER link lists, you can load them into the project options under the appropriate engine tabs. The tool will then prioritize these targets over any random crawling, leading to faster build cycles and a higher submission-to-verified ratio. Remember to pair your lists with corresponding content spintax and carefully configured captcha solvers, because a brilliant target URL is useless if the submission step fails repeatedly.

The Role of Tiered Linking

GSA SER link lists are not reserved exclusively for money sites. In fact, their most popular application is on tier 2 and tier 3 properties. A curated list of indexer URLs helps Google discover your first-tier backlinks faster. Large lists of comment and trackback targets can point sheer volume at a buffer page, passing authority up the chain without ever touching your main domain. This layered approach protects your brand while still harnessing the brute force that well-organized GSA SER link lists can provide.

Avoiding the Common Pitfalls

Blindly importing enormous link lists is the fastest way to burn through proxies and raise red flags. Always tune the thread count, the delay between submissions, and per-domain limits. If your GSA SER link lists contain too many identical platforms, the tool will replicate patterns that algorithms detect. Diversify not just the domains but also the platform types, TLDs, and IP ranges. A healthy mix of .edu guestbooks, foreign forums, and obscure CMS footprints creates the variety that looks natural to a crawler.

Moving Forward with Confidence

The market for GSA SER link lists continues to evolve as search engines get smarter. Today, the emphasis lies on relevance, trusted top-level domains, and links embedded within readable content. By treating your target collection as a living asset that requires constant grooming, you turn the software from a blunt instrument into a surgical tool. Start small, verify relentlessly, and expand only when your success rate proves the integrity of your chosen GSA SER link lists.